At GTC 2026, NVIDIA didn’t just talk about DLSS or classic visual enhancements. The company presented a deeper and potentially disruptive approach: integrating neural networks directly into the heart of game rendering with Neural Texture Compression and Neural Materials. The objective is clear: to drastically reduce the size of textures and speed up material evaluation, two of the most expensive elements in modern graphics engines.

Neural Texture Compression: textures more than 6x smaller thanks to AI

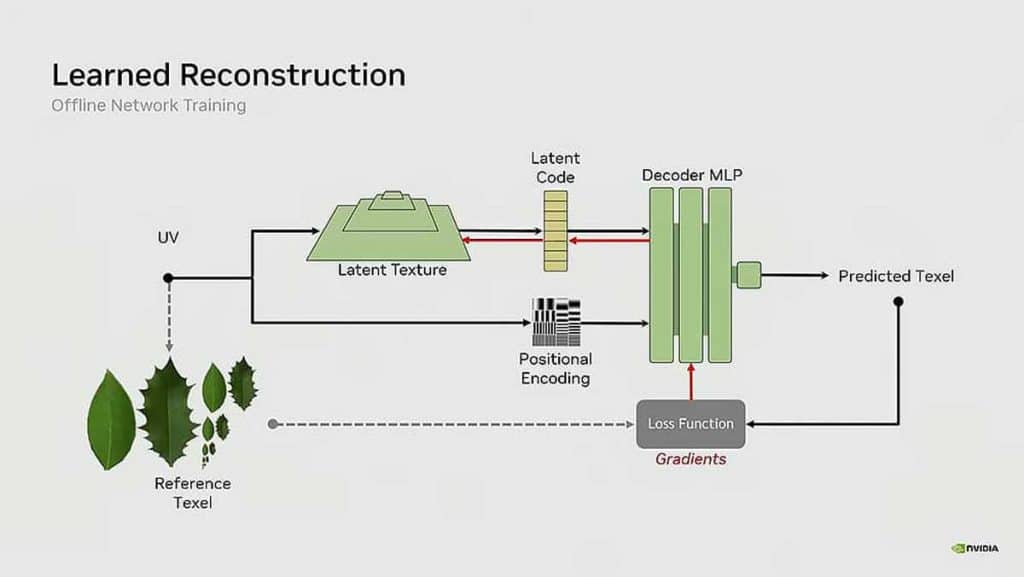

Where traditional methods like BCn store complete compressed textures, NVIDIA proposes a completely different approach: store a compact representation and let a small neural network reconstruct the texture in real time on the GPU.
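To make the idea concrete, here is a minimal sketch in Python of what "compact representation plus small network" can look like: a tiny grid of latent features is sampled at a UV coordinate, and a small MLP turns the result into an RGB value. Every size, name, and weight here is an assumption for illustration (the weights are random, whereas a real system would train them for each texture set), and this is not NVIDIA's actual format or API.

```python
import math
import random

# Hypothetical sizes chosen for illustration only; not NVIDIA's actual format.
LATENT_RES = 4      # the latent grid is LATENT_RES x LATENT_RES
LATENT_DIM = 4      # features stored per latent texel
HIDDEN = 8          # width of the single hidden layer
OUT_CHANNELS = 3    # RGB

rng = random.Random(0)  # fixed seed so the sketch is deterministic


def rand_matrix(rows, cols):
    """Random stand-in for trained weights."""
    return [[rng.uniform(-1.0, 1.0) for _ in range(cols)] for _ in range(rows)]


# The "compressed texture": a small latent grid plus the MLP weights.
latents = [[[rng.uniform(-1.0, 1.0) for _ in range(LATENT_DIM)]
            for _ in range(LATENT_RES)] for _ in range(LATENT_RES)]
w1, b1 = rand_matrix(HIDDEN, LATENT_DIM), [0.0] * HIDDEN
w2, b2 = rand_matrix(OUT_CHANNELS, HIDDEN), [0.0] * OUT_CHANNELS


def sample_latent(u, v):
    """Bilinearly interpolate the latent grid at UV coordinates in [0, 1]."""
    x, y = u * (LATENT_RES - 1), v * (LATENT_RES - 1)
    x0, y0 = int(x), int(y)
    x1, y1 = min(x0 + 1, LATENT_RES - 1), min(y0 + 1, LATENT_RES - 1)
    fx, fy = x - x0, y - y0
    return [
        (1 - fx) * (1 - fy) * latents[y0][x0][i]
        + fx * (1 - fy) * latents[y0][x1][i]
        + (1 - fx) * fy * latents[y1][x0][i]
        + fx * fy * latents[y1][x1][i]
        for i in range(LATENT_DIM)
    ]


def dense(weights, bias, x):
    """One fully connected layer: weights @ x + bias."""
    return [sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(weights, bias)]


def decode_texel(u, v):
    """Reconstruct one RGB texel from the latent grid with the small MLP."""
    z = sample_latent(u, v)
    h = [max(0.0, a) for a in dense(w1, b1, z)]           # ReLU
    out = dense(w2, b2, h)
    return [1.0 / (1.0 + math.exp(-o)) for o in out]      # sigmoid -> [0, 1]


rgb = decode_texel(0.5, 0.5)
print(rgb)
```

The point of the sketch is the division of labor: what sits in memory is the small latent grid and a handful of weights, and the full-resolution color only exists at the moment a shader asks for it.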

The gain is spectacular. In one scene shown, the conventional version took up around 6.5 GB of VRAM. With neural compression, the same scene came down to just 970 MB. That is a reduction of around 85%, a huge figure at a time when video memory has become a limiting factor in many recent games.
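The two quoted figures are enough to check both the "more than 6x" in the heading and the 85% reduction. The short calculation below assumes decimal units (1 GB = 1000 MB), since the presentation's convention is not stated; with 1024 MB per GB the ratio comes out slightly higher.

```python
baseline_gb = 6.5      # VRAM used by the conventional scene, as quoted
compressed_mb = 970    # VRAM used with neural compression, as quoted

# Assumption: decimal units, 1 GB = 1000 MB.
baseline_mb = baseline_gb * 1000

ratio = baseline_mb / compressed_mb
reduction_pct = (1 - compressed_mb / baseline_mb) * 100

print(f"compression ratio: {ratio:.1f}x")         # compression ratio: 6.7x
print(f"memory reduction: {reduction_pct:.0f}%")  # memory reduction: 85%
```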

Beyond memory space, NVIDIA also claims to improve visual quality. At an equivalent memory budget, AI-compressed textures show fewer artifacts and retain more detail than traditional methods. For the gamer, this could mean lighter games, faster installs, and better graphics quality without an explosion in hardware requirements.

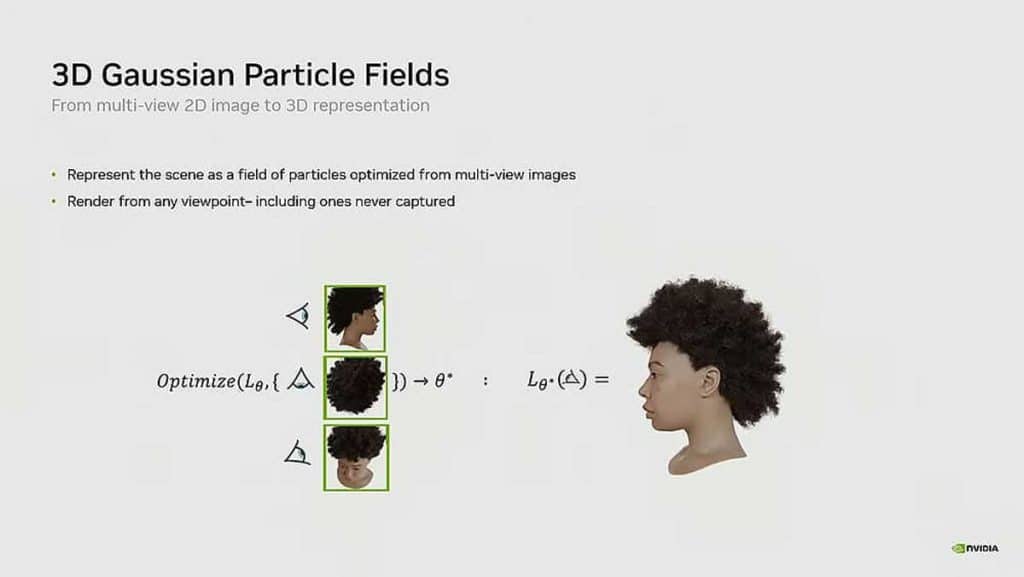

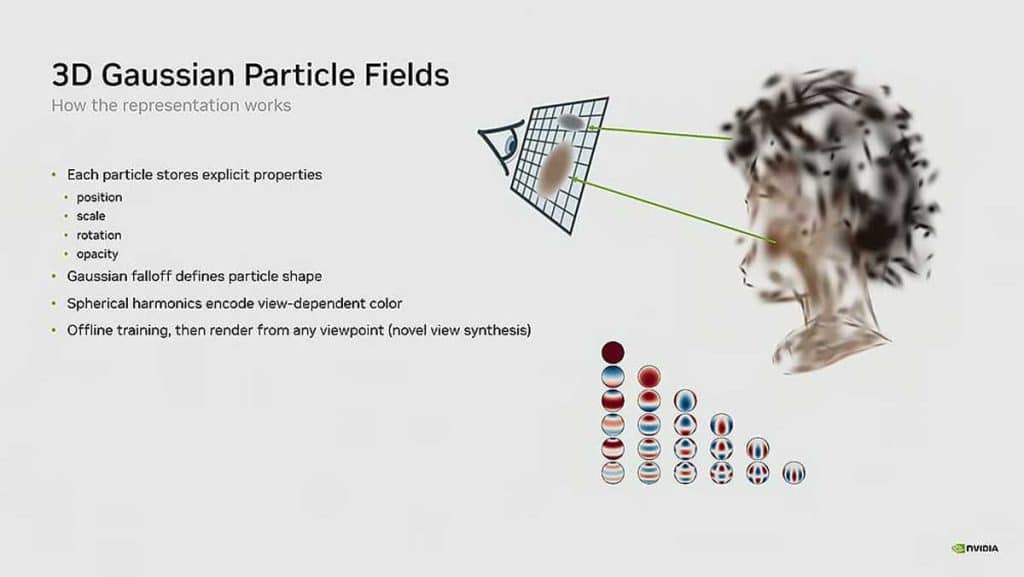

Neural materials to speed up rendering

The other innovation presented concerns materials, the part of rendering that determines how light interacts with surfaces. Today, materials rely on complex and computationally expensive models, often composed of multiple layers and textures.

NVIDIA's alternative is Neural Materials. The principle is similar: replace these complex structures with a compact neural representation capable of reproducing the visual behavior of the material with much less data.

In the demonstration, a traditional material using 19 channels is reduced to only 8 channels in its neural version. This simplification makes it possible to significantly reduce the computational load while maintaining faithful rendering.

The performance gains are particularly impressive. According to NVIDIA, rendering can be accelerated by 1.4x to 7.7x depending on the case, especially in complex real-time scenes. Even in the least favorable scenarios, the improvement remains significant and opens the door to much more detailed environments without sacrificing fluidity.

AI integrated directly into the graphics pipeline

One of the most important points of this approach is its direct integration into the rendering pipeline. Unlike technologies such as DLSS, which operate at the end of the chain on a 2D image, these solutions act upstream, at the very heart of image generation.

The networks are deliberately simple multilayer perceptrons (MLPs), so that they can be executed millions of times per frame without the cost exploding. They are designed to run directly on the GPU, notably leveraging Tensor Cores, which helps maintain high performance in real time.
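A back-of-the-envelope count shows why the networks have to stay small. The sketch below assumes a hypothetical MLP shape (8 inputs, two hidden layers of 16 neurons, 3 outputs) and one network evaluation per pixel of a 4K frame at 60 frames per second; none of these numbers come from NVIDIA, they only illustrate the order of magnitude.

```python
# Hypothetical network shape: 8 inputs, two hidden layers of 16, 3 outputs.
LAYER_SIZES = [8, 16, 16, 3]
WIDTH, HEIGHT = 3840, 2160   # assumed 4K frame
FPS = 60                     # assumed target frame rate


def macs_per_inference(sizes):
    """Multiply-accumulate operations for one forward pass (biases ignored)."""
    return sum(a * b for a, b in zip(sizes, sizes[1:]))


macs = macs_per_inference(LAYER_SIZES)   # 8*16 + 16*16 + 16*3 = 432
pixels = WIDTH * HEIGHT                  # 8,294,400 evaluations per frame
macs_per_frame = macs * pixels
macs_per_second = macs_per_frame * FPS

print(f"{macs} MACs per inference")
print(f"{macs_per_frame / 1e9:.2f} billion MACs per frame")
print(f"{macs_per_second / 1e12:.3f} trillion MACs per second")
```

Under these assumptions, a few hundred multiply-accumulates per evaluation already add up to billions per frame. Doubling the hidden layer width roughly quadruples the cost of the hidden-to-hidden layer, which is why a compact MLP running on matrix hardware is the practical choice.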

Towards less demanding games… or even more ambitious ones

With these technologies, NVIDIA is not simply seeking to improve what already exists, but to redefine part of modern graphics rendering. By reducing memory consumption and computational cost, developers could either ease hardware requirements or push the level of detail and complexity of games even further.

A major unknown remains: how these technologies will be used. As is often the case, the gains could go toward optimizing performance… or toward raising visual ambitions even further, thus maintaining pressure on the hardware. One thing is certain: with Neural Texture Compression and Neural Materials, NVIDIA is already paving the way for a new generation of graphics engines.
